Linescan Camera Processing

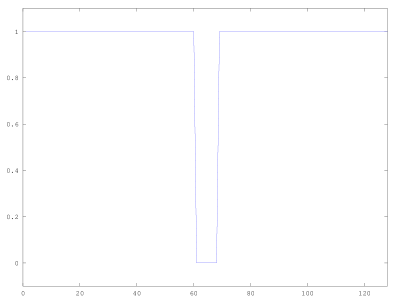

One recent project that I have been working on is a line-following car. A TSL1401-DB linescan camera allowed the car to see the track. Each scanline of data consisted of 128 pixels, each value corresponding to the amount of visible light at that pixel. At first glance, obtaining the location of the line seems trivial. It would seem safe to assume that the camera sees a flat profile with a small dip where the black line is. If that holds true, an inversion and weighted average should give the location of the line. <!-- :truncate: -->
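As a rough illustration of that idealized approach, here is a minimal Python sketch. The function name `naive_line_position` and the synthetic scan are my own assumptions for illustration, not code from the car: it inverts the scan against its brightest pixel and takes the weighted average of the pixel indices.

```python
def naive_line_position(scan):
    """Centroid of the inverted scan, or None if the scan is perfectly flat."""
    peak = max(scan)
    inverted = [peak - v for v in scan]  # the dark line becomes a bump
    total = sum(inverted)
    if total == 0:
        return None  # nothing darker than the background
    return sum(i * w for i, w in enumerate(inverted)) / total


# Synthetic, idealized scanline: flat background with a dark line on pixels 60..67.
scan = [200] * 128
for i in range(60, 68):
    scan[i] = 50

position = naive_line_position(scan)
print(position)  # 63.5, the center of the dip
```

This only behaves well when the background really is flat; any slope or vignetting in the profile pulls the centroid away from the line.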

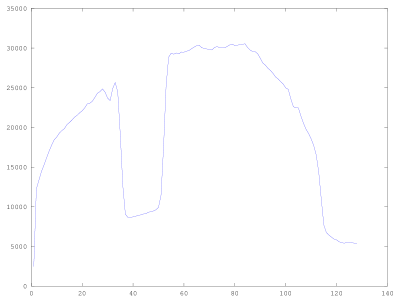

Unfortunately, the data that came off the camera was not ideal because of a non-linearity. The test data shown in Fig. 2 was generated by aiming the camera at a low-contrast test track. This track consisted of electrical tape on a uniform gray surface.

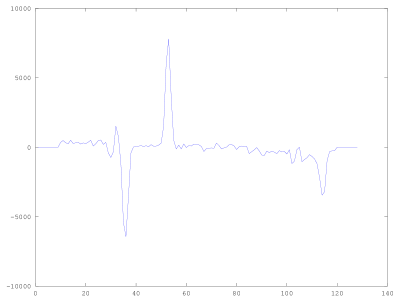

Looking at the above data, it is still clear that there is a line within view of the camera. One could characterize it as a local minimum or as a region below a threshold when the edges are ignored. Another approach is to recognize the line from the sharp edges that it produces in the profile. By exploiting these edges, it is not too difficult to find the approximate location of the line. Taking the derivative of the linescan data yields Fig. 3.
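For sampled data, the derivative can be approximated with a first difference between neighboring pixels. The sketch below assumes that approximation (the original processing may have used a different filter), and the name `first_difference` and the synthetic scan are illustrative only.

```python
def first_difference(scan):
    """Discrete derivative: difference between each pixel and its left neighbor."""
    return [b - a for a, b in zip(scan, scan[1:])]


# Synthetic scanline: flat background with a dark line on pixels 60..67.
scan = [200] * 128
for i in range(60, 68):
    scan[i] = 50

diff = first_difference(scan)
# The falling edge into the line shows up as a negative spike,
# and the rising edge out of it as a positive spike.
falling_edge = diff.index(min(diff))
rising_edge = diff.index(max(diff))
```

Note that the result has one sample fewer than the scan, and sample `k` describes the step between pixels `k` and `k + 1`.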

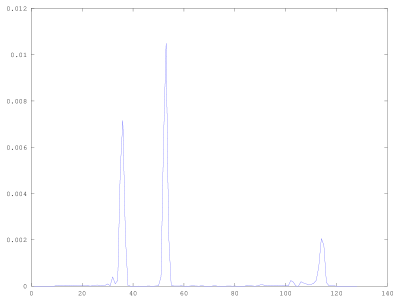

After stripping away some of the edge values, taking the absolute value, and normalizing, Fig. 4 was produced.
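These three steps can be sketched as follows. The `margin` of 8 samples and the choice to normalize so that the values sum to 1 are assumptions made for this example; the post does not say how many edge values were stripped or which normalization was used.

```python
def edge_profile(scan, margin=8):
    """Trimmed, rectified, and normalized derivative of a scanline."""
    diff = [b - a for a, b in zip(scan, scan[1:])]
    trimmed = diff[margin:len(diff) - margin]  # drop unreliable edge values
    magnitude = [abs(d) for d in trimmed]      # both line edges become positive peaks
    total = sum(magnitude)
    if total == 0:
        return [0.0] * len(magnitude)          # flat scan: no edges at all
    return [m / total for m in magnitude]      # weights now sum to 1


# Synthetic scanline: flat background with a dark line on pixels 60..67.
scan = [200] * 128
for i in range(60, 68):
    scan[i] = 50

profile = edge_profile(scan)
```

Normalizing to a unit sum is convenient because the result can be used directly as the weights in a weighted average.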

This data only needs a weighted sum to find the position of the observed line. While this method works quite well at finding a line within the view of the camera, it does a poor job of identifying whether a line is actually there. By looking at how the energy of the linescan is spread in Figs. 3 and 4, a threshold can be set to determine whether a line is in view, but discriminating one line from another requires more information to classify the input.
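One way to sketch both the weighted sum and the "is there a line at all" check is shown below. Measuring the spread as the weighted standard deviation around the centroid is my own assumption, as are the names `locate_line`, `offset`, and `max_spread` and the threshold value of 10; the post only says that a threshold on the energy spread was used.

```python
import math


def locate_line(profile, offset=0.0, max_spread=10.0):
    """Weighted-average position, or None when the energy is too spread out."""
    total = sum(profile)
    if total == 0:
        return None  # no edges at all
    centroid = sum(i * w for i, w in enumerate(profile)) / total
    variance = sum(w * (i - centroid) ** 2 for i, w in enumerate(profile)) / total
    if math.sqrt(variance) > max_spread:
        return None  # energy is smeared across the view: probably no line
    return centroid + offset


# Two equal edge peaks, as produced by a dark line on pixels 60..67
# after differencing and trimming 8 samples from each end.
profile = [0.0] * 111
profile[51] = 0.5
profile[59] = 0.5

# offset = 8 trimmed samples + 0.5 because a difference sits between two pixels
position = locate_line(profile, offset=8.5)
print(position)  # 63.5

# Evenly spread energy (no distinct edges) is rejected.
no_line = locate_line([1.0 / 111] * 111, offset=8.5)
print(no_line)  # None
```

A check like this can reject a featureless view, but it cannot tell two different lines apart, which is the limitation described above.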

In practice this method worked, but only when the car had little chance of losing the line. When the line was lost, better discrimination and basic heuristics were needed to permit sticky steering.